Jan Blommaert

“From Adam Smith in 1776 to Irving Fisher in 1930, economists were thinking about intertemporal choice with Humans in plain sight. Econs began to creep in around the time of Fisher, as he started on the theory of how Econs should behave. But it fell to a twenty-two-year-old Paul Samuelson, then in graduate school, to finish the job”. (Richard H. Thaler, Misbehaving: The Making of Behavioral Economics, p.89; New York: Norton, 2015).

Richard Thaler, in this wonderful book, uses the terms “Humans” and “Econs” to distinguish between two kinds of actors. “Humans” are real people observed in real life, having real interests, attitudes and modes of thought and behavior that are often, let us say, suboptimal. “Econs”, by contrast, are fictional characters: ideal people who don’t have passions or biases, are always rational, possess a maximum of information and are able to convert this linearly into economic behavior. Thaler’s book is a powerful argument in favor of an economic science that keeps track of, and explains, Human behavior as, at least, a qualification to the kinds of fictional predictions of Econs’ behavior that occupy the Economics mainstream.

In so doing, Thaler also directs our attention towards the small historical window in which the current mainstream doctrine emerged and flourished. For almost two centuries, Economics was preoccupied with real markets, customers, prices and policies – Adam Smith’s Theory of Moral Sentiments setting the scene for an Economics that dealt with the whims of human social behavior. The discipline abandoned this focus merely about half a century ago, when Samuelson, Arrow and some others replaced muddled descriptions of reality with elegant mathematical “models”, supposed to be of absolute and eternal precision and capable of bypassing the uncertainties and historical situatedness of real human minds. When critics pointed towards such minds (and their tendency to violate the rules of such elegant models), the response was that, willingly or not, people in economic activities would behave “as if” they had done the intricate calculations captured in the models. Thaler’s book is a lengthy and pretty detailed refutation of that “as if” argument: if nobody actually operates in the ways laid down in mathematical models, why not take such deviations – “misbehaving” Humans – seriously? For someone such as myself, involved in ethnographic studies of Humans and their social behavior, this question is compelling and the arguments it provokes inescapable.

Thaler is not a nobody in his field – he’s the 2015 President of the American Economic Association; he will be able to ask this question urbi et orbi and with a stentorian voice. There might be some obstacles, though. Interestingly, the kinds of Economics designed by Samuelson and his comrades were (and are) seen as truly “scientific”. The conversion of a science grounded in observations of actually occurring behavior to a science concerned with abstract mathematical modeling was seen as the moment at which Economics became a real science, a complex of knowledge practices not tainted by the fuzziness of actual social facts but aiming at absolute Truth – something invariably expressed not in prose but in graphics, tables and figures, in which a new abstract model could be seen as a major scientific breakthrough (just look at the list of Economics Nobel Prize winners since the 1960s, and read the citations for their selection). As for the teaching and training of aspiring economists, it was thought that they would now be truly “scientific”, since students would learn abstract and ideal frameworks presented as absolutely generative, in the sense that any form of real behavior could be measured against them and explained in their terms. No more nonsense, no more description – a normative theory such as that of Samuelson (sketching how ideal people should act) would henceforth be presented as a descriptive one (effectively documenting and explaining how they actually act) as well – an absolute theory, in other words. The shift away from “realism” – the aim of descriptive theories – towards ideal-typical modeling – the aim of normative theories – was seen as irrelevant. Economics became “scientific” as soon as it abandoned realism as an ambition.

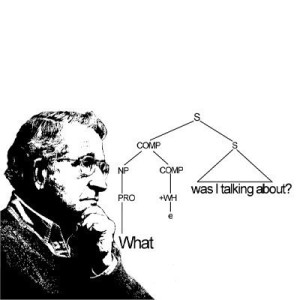

It is interesting to see that in post-World War II academia, similar moves were made in different disciplines. Chomsky’s revolution in Linguistics (caused by his Syntactic Structures, 1957) is an example. Whereas Linguistics until Chomsky was largely driven by descriptive aims and methods (go out and describe a real language), in which careful empirical description and comparison would ultimately lead to adequate generalization (Saussure’s Langue), Chomsky saw real Human language as propelled by an abstract formal and generative competence, describable as a finite set of abstract rules capable of generating every possible sentence in a language. This, too, was seen at the time as a major leap towards scientific maturity, and senior philosophers of science (already accustomed to seeing formalisms such as mathematical logic as the purest forms of meaning) argued that, with Chomsky, Linguistics had finally become a “science”. Linguists, from now on, would no longer do fieldwork – the interest in listening to what real people actually said was disqualified – but rely on “introspection”: one’s own linguistic intuitions were good enough as a base for doing “scientific” Linguistics. It took half a century of sociolinguistics to replace this withdrawal from realism with a renewed attention to actual variation and diversity in real language. Contemporary sociolinguistics, consequently, operates towards Linguistics very much like Thaler’s Behavioral Economics towards mainstream Economics: as a sustained attempt at making this “science” realistic again.

Similar stories can be told with respect to disciplines such as psychology and sociology, and later cognitive science, where the desire to become “scientific”, in the same era, led to a canonical “science” in which white-room experiments and quantifiable surveys replaced actual observation of situated social behavior and attention to what people really did and said about themselves and society.

There, too, the assumptions were the same: the actual social behavior of people is driven by a “deeper” abstract level of psychological, social and cognitive processes which can be captured and tested by detaching individuals from their real-life environments and submitting them to testing procedures that bear no connection whatsoever to any other actual form of social and meaningful behavior. Thus, cognitive, psychic and emotional behavior can be accurately and “scientifically” studied by putting individuals into an MRI scanner, where they stay entirely immobile and cut off from any outside stimulus for 45 minutes. The outcomes of such procedures (quite paradoxically called “empirical” by practitioners) are presented, remarkably (or better, incredibly), as accurate accounts of real, situated and contextually sensitive social and mental activity. Abstract modeling of what we could call “Psychons”, here as well, is seen not only as a normative enterprise but as a descriptive one too, predicting (with various degrees of accuracy) Human behavior. This study of Psychons, then, is the real “science”, often rhetorically opposed to and contrasted with the “storytelling” or “journalism” of research grounded in actual observation and description of Humans (turning one of the 20th century’s most influential intellectuals, Sigmund Freud, into a fiction writer). Senior sociologists and psychologists such as Herbert Blumer and Aaron Cicourel brought powerful (and never effectively refuted) methodological arguments against this shift away from realism and towards “science” – their arguments were dismissed as unhelpful.

So here we are: knowledge disciplines concerned with Man and society appear to be “scientific” only when they deliberately reject the challenge of realism – “reality talking back”, as Herbert Blumer famously called it – and engage in abstract formalization and modeling, regardless of whether or not such formal schemes and models stand the test of empirical reality checks. Such “science”, because it dismisses this kind of systematic reality check, also becomes incapable of describing change. Experiments need to be “repeatable” in order to be “scientific”, and consequently we continuously check and test things that have to remain stable in order to be scientifically testable. Actual social processes and realities, however, are not “experiments”: they display a strong tendency to change perpetually, which precludes repeatability, and consequently they can never be “scientifically” addressed. This feature – the bias towards stability and the inability to address change – is a constant in all these “sciences”. And those who practice such “sciences” are actually proud of it. Strange, isn’t it?

We live by our mythologies, Roland Barthes famously said. One of the mythologies we live by is that of “science” being necessarily, because of its own criteria for validity, unrealistic, and therefore often outlandish and outrageous in its findings and conclusions. It would be good, therefore, to return to the old debates that historically accompanied the shifts in the disciplines I mentioned here, and carefully examine the validity of the critical arguments brought against these kinds of “science”. To the extent that people still believe that “re-search” means “looking again”, i.e. being continuously critical of one’s own knowledge doctrines, this would be an eminently scientific practice.

PS (2017): Richard Thaler was awarded the Economics Nobel Prize in October 2017.